
Data centers are the high-stakes, mission-critical infrastructure of the digital age. They are growing rapidly in complexity, driven by the intense demands of modern AI and hyperscale workloads. Yet the industry’s design process remains rooted in the traditional Architecture, Engineering, and Construction (AEC) workflow: a slow, change-averse process where systems are manually drawn, element by element.

This mismatch between digital complexity and analog design methodology is creating significant risk and cost for owners and operators.

What if building design worked more like software development? What if every design decision could be version-controlled, automatically validated, and regenerated on demand, the way code is? By moving from manually drawn systems to programmatically defined ones, the industry can automate, improve precision, and enable continuous validation, transforming how these complex facilities are conceived, coordinated, and delivered.

The core challenge in modern data center design is the staggering and still-increasing complexity of these facilities, particularly in their technology and connectivity systems.

The sheer density of modern data center networking requires a huge number of connections between racks. For hyperscalers, this means relying on pre-terminated fiber optic cabling manufactured off-site using new, high-density connectors like MMC. The scale is so massive that field termination is impossible.

These design-time decisions carry a huge operation-time risk. If a change to the equipment layout alters cable lengths and that change is not tracked, a whole facility’s worth of manufactured fiber optic cable may have to be thrown out. A single, unvalidated design change can turn millions of dollars of custom cable into scrap, resulting in project delays and significant added cost. This problem is exacerbated by the push to interconnect entire buildings, campuses, and regions with scale-out and scale-across networks for training AI models across hundreds of thousands of accelerators.
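To make the risk concrete, here is a minimal Python sketch of how a layout change could be checked against a cable order that has already been placed. Everything in it is an assumption for illustration: the `Rack` type, the Manhattan-distance length rule, the 2 m slack, the 0.5 m tolerance, and the coordinates are hypothetical and do not come from any real project or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rack:
    name: str
    x_m: float  # position along the row, in metres
    y_m: float  # position across rows, in metres


def cable_length_m(a: Rack, b: Rack, slack_m: float = 2.0) -> float:
    """Tray-following (Manhattan) run between two racks plus a fixed slack."""
    return abs(a.x_m - b.x_m) + abs(a.y_m - b.y_m) + slack_m


def stale_cables(links, layout, ordered_lengths, tolerance_m=0.5):
    """List links whose required length has drifted from the ordered length."""
    stale = []
    for a, b in links:
        required = cable_length_m(layout[a], layout[b])
        ordered = ordered_lengths[(a, b)]
        if abs(required - ordered) > tolerance_m:
            stale.append((a, b, ordered, required))
    return stale


# Layout at the time the cable order was placed (illustrative coordinates).
layout_v1 = {
    "R1": Rack("R1", 0.0, 0.0),
    "R2": Rack("R2", 6.0, 0.0),
    "R3": Rack("R3", 6.0, 3.0),
}
links = [("R1", "R2"), ("R2", "R3")]
ordered = {(a, b): cable_length_m(layout_v1[a], layout_v1[b]) for a, b in links}

# A later revision shifts R2 by 1.2 m without anyone updating the cable order.
layout_v2 = {**layout_v1, "R2": Rack("R2", 7.2, 0.0)}

for a, b, was, now in stale_cables(links, layout_v2, ordered):
    print(f"{a}-{b}: ordered {was:.1f} m, layout now needs {now:.1f} m")
```

In this toy layout, moving one rack invalidates both cables that touch it, which is the kind of knock-on effect that is easy to miss when lengths are tracked by hand.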

For clients building clusters and campuses that support AI training runs, which can cost millions and run continuously for days or weeks, design correctness is not just about construction cost; it’s about operational performance.

A flaky connection, a slight deviation from the specified bend radius or cabling distance, or a power capacity limit that wasn’t properly checked can lead to degraded performance in a compute cluster. The construction design choices can have an extreme impact on the long-term operational capabilities of the facility. The client’s risk is not just project delay, but compromised revenue and mission failure if design specifications are not validated for every design decision. This attention to detail across disciplines in a complex building project is simply infeasible with CAD and BIM.
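As an illustration of what validating every design decision might look like, the following Python sketch checks fiber runs and rack circuits against a few limits. The limits, class names, and field names are hypothetical placeholders; real values would come from the project specification and the cable manufacturer.

```python
from dataclasses import dataclass

# Illustrative limits only; real values come from the project specification.
MAX_RUN_LENGTH_M = 100.0
MIN_BEND_RADIUS_MM = 30.0
MAX_POWER_UTILIZATION = 0.8  # fraction of circuit capacity allowed


@dataclass
class FiberRun:
    name: str
    length_m: float
    tightest_bend_mm: float  # smallest bend radius along the routed path


@dataclass
class RackCircuit:
    name: str
    load_kw: float
    capacity_kw: float


def check_fiber(run: FiberRun) -> list[str]:
    """Return one message per violated fiber limit."""
    errors = []
    if run.length_m > MAX_RUN_LENGTH_M:
        errors.append(f"{run.name}: length {run.length_m} m exceeds {MAX_RUN_LENGTH_M} m")
    if run.tightest_bend_mm < MIN_BEND_RADIUS_MM:
        errors.append(f"{run.name}: bend radius {run.tightest_bend_mm} mm is below {MIN_BEND_RADIUS_MM} mm")
    return errors


def check_power(circuit: RackCircuit) -> list[str]:
    """Return a message if the load exceeds the allowed share of capacity."""
    limit_kw = circuit.capacity_kw * MAX_POWER_UTILIZATION
    if circuit.load_kw > limit_kw:
        return [f"{circuit.name}: load {circuit.load_kw} kW exceeds limit {limit_kw} kW"]
    return []


def validate(runs, circuits) -> list[str]:
    """Run every check over every element and collect all violations."""
    errors = []
    for run in runs:
        errors.extend(check_fiber(run))
    for circuit in circuits:
        errors.extend(check_power(circuit))
    return errors


runs = [FiberRun("F1", 42.0, 35.0), FiberRun("F2", 120.0, 25.0)]
circuits = [RackCircuit("R1", 30.0, 40.0), RackCircuit("R2", 35.0, 40.0)]
for message in validate(runs, circuits):
    print(message)
```

The point of the sketch is that each rule is written once and then applied to every element in the model, rather than being spot-checked by a reviewer.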

Traditional design relies on human designers manually coordinating conflicting systems, including MEP, structural, and technology. The complexity of today’s facilities is outstripping the capabilities of traditional Building Information Modeling (BIM) tools. The software your local architect uses to design a home is often the same software used for a multi-million-dollar hyperscale data center.

The result is a design process that is simply not equipped to handle the level of complexity of data center projects. Issues are detected late, usually during coordination meetings, weeks after the design error was introduced. This forces costly rework and delay. Alternatively, data center operators employ huge teams to take design and review work in-house, consuming the time of professionals who could be pushing the boundaries of the organization’s core competency.

The solution to managing this immense complexity is to adopt the mindset and methodology of large-scale software development. The current AEC model is averse to change, seeing scope adjustments as change orders and treating building information models as precious objects that are created by hand. Software, on the other hand, is iterative and embraces change, where every keystroke can be easily captured, version controlled, and audited.

Consider an analogy to illustrate the shift: It’s the difference between sculpting a building (manually chipping away at a block of marble) and 3D printing a building (programming the printer and letting it churn out the design).

In software, a CI/CD (continuous integration and continuous deployment) pipeline is an automated process in which the entire codebase is compiled and tested against a full suite of requirements. It can run on a set schedule or in response to every change. This approach verifies that the codebase still meets its requirements after each change.

In a code-driven design workflow, the core design logic (fiber optic specs, power limitations, clearances, connectivity patterns) is encoded in algorithms. Continuous integration and continuous deployment is then the automated process in which the entire building model is regenerated from that logic and checked against the project’s requirements, on a set schedule or in response to every change.

This system acts as continuous validation, ensuring design intent is preserved and conflicts are caught automatically and immediately.
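A rough sketch of such a validation step, written in Python, might look like the following. It is not BuildScript and not any particular CI product: the dictionary-based model, the `check` decorator, and the three rules are all hypothetical, chosen only to show the pattern of registering rules once and running all of them on every change.

```python
from collections import Counter
from typing import Callable

Model = dict  # assumed shape: {"ports": [...], "links": [(port_a, port_b), ...]}
CHECKS: list[Callable[[Model], list[str]]] = []


def check(fn):
    """Register a validation rule so that every build runs it."""
    CHECKS.append(fn)
    return fn


@check
def every_port_is_connected(model):
    used = {p for link in model["links"] for p in link}
    return [f"{p} is not connected" for p in model["ports"] if p not in used]


@check
def no_port_is_used_twice(model):
    counts = Counter(p for link in model["links"] for p in link)
    return [f"{p} appears in {n} links" for p, n in counts.items() if n > 1]


@check
def links_reference_known_ports(model):
    known = set(model["ports"])
    return [f"{p} is not a defined port"
            for link in model["links"] for p in link if p not in known]


def run_build(model) -> dict[str, list[str]]:
    """Run every registered check; return only the failures, keyed by check name."""
    report = {fn.__name__: fn(model) for fn in CHECKS}
    return {name: errors for name, errors in report.items() if errors}


def main(model) -> int:
    """Print failures and return a process-style exit code (0 means pass)."""
    failures = run_build(model)
    for name, errors in failures.items():
        for error in errors:
            print(f"FAIL {name}: {error}")
    return 1 if failures else 0


good = {"ports": ["A1", "B1", "A2", "B2"], "links": [("A1", "B1"), ("A2", "B2")]}
bad = {"ports": ["A1", "B1", "A2", "B2"], "links": [("A1", "B1"), ("A2", "B1")]}

exit_code = main(bad)  # a CI job would pass this value to sys.exit()
```

Because the rules live in one registry, adding a new requirement means adding one function, and every later change to the model is checked against it automatically.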

Building automated checks for correctness into the process from the start delivers immediate, tangible benefits for data center owners and developers.

The most significant benefit is shifting error detection from the late-stage field or coordination meeting to the automated build system. This model ensures that issues are identified earlier, preventing the team from waiting until a formal validation review or value engineering meeting to discover a fundamental design conflict.

With technology systems advancing rapidly, particularly with AI workloads, the idea of freezing a design doesn’t work. The AEC process needs to embrace change.

CI/CD for buildings enables flexibility. If the architectural background changes, or if the client updates the hardware generation of the GPUs that they are installing, the design code is updated, and the entire system regenerates accurately and instantly around the new constraints. This dramatically cuts iteration time, allowing teams to explore design options and adapt to technological advancements rapidly.
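As a simplified illustration of regenerating a design around a new constraint, the Python sketch below recomputes a rack-to-PDU assignment when the per-rack power draw changes. The function name, the parameters, and the power figures are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
import math


def plan_pdus(rack_count: int, rack_kw: float, pdu_capacity_kw: float) -> dict[str, list[str]]:
    """Assign racks to PDUs so that no PDU exceeds its capacity."""
    racks_per_pdu = int(pdu_capacity_kw // rack_kw)
    if racks_per_pdu < 1:
        raise ValueError("a single rack exceeds the PDU capacity")
    pdu_count = math.ceil(rack_count / racks_per_pdu)
    racks = [f"R{i:03d}" for i in range(rack_count)]
    return {
        f"PDU-{p:02d}": racks[p * racks_per_pdu:(p + 1) * racks_per_pdu]
        for p in range(pdu_count)
    }


# Hypothetical design parameters for the current hardware generation.
design = {"rack_count": 24, "rack_kw": 40.0, "pdu_capacity_kw": 400.0}
before = plan_pdus(**design)

# The client moves to a denser hardware generation: one parameter changes,
# and the whole assignment is regenerated rather than redrawn.
design["rack_kw"] = 120.0
after = plan_pdus(**design)

print(len(before), "PDUs before;", len(after), "PDUs after")
```

The change is a one-line edit to the inputs; the downstream consequences (here, more PDUs with fewer racks each) follow from rerunning the same logic.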

By defining system patterns in code (e.g., place this rack type, connect it to this PDU, and route all connections along the shortest, compliant path), a single, succinct definition can generate hundreds or thousands of detailed elements.
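The pattern in parentheses above can be sketched in a few lines of Python. This is an assumption-laden toy, not BuildScript: the grid of tray cells, the one-PDU-per-row rule, the naming scheme, and the use of breadth-first search with keep-out cells as a stand-in for "shortest, compliant path" are all hypothetical simplifications.

```python
from collections import deque


def shortest_route(start, goal, width, height, blocked=frozenset()):
    """Breadth-first search on a grid of tray cells; returns the cell path or None."""
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            inside = 0 <= nxt[0] < width and 0 <= nxt[1] < height
            if inside and nxt not in blocked and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return None


def generate_design(rows, racks_per_row):
    """Expand one pattern into a rack, a PDU assignment, and a route per rack."""
    width, height = racks_per_row + 1, rows * 2  # odd y values are aisles
    design = []
    for r in range(rows):
        pdu_cell = (0, r * 2)  # one PDU at the head of each row
        for i in range(racks_per_row):
            rack_cell = (i + 1, r * 2)
            design.append({
                "rack": f"R{r:02d}-{i:02d}",
                "pdu": f"PDU-{r:02d}",
                "route": shortest_route(rack_cell, pdu_cell, width, height),
            })
    return design


# One short definition expands into a thousand racks, each with a route.
design = generate_design(rows=20, racks_per_row=50)
print(len(design), "racks generated")

# With a keep-out cell on the direct path, the router finds a detour.
detour = shortest_route((3, 0), (0, 0), width=4, height=2, blocked={(2, 0)})
print(detour)
```

The two arguments to `generate_design` are the entire "drawing"; every rack name, PDU assignment, and route is derived from them and can be regenerated whenever they change.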

This algorithmic generation allows the design process to handle the exponential complexity of data centers. The precision that used to be pushed out to the field is now pulled up into the design process, ensuring every single connection and component is precisely defined and validated.

Adopting a software-style workflow requires a cultural and conceptual shift within the AEC industry. The key conceptual leap is for designers and engineers to view their design intent abstractly, as an algorithm, not just as a 3D model.

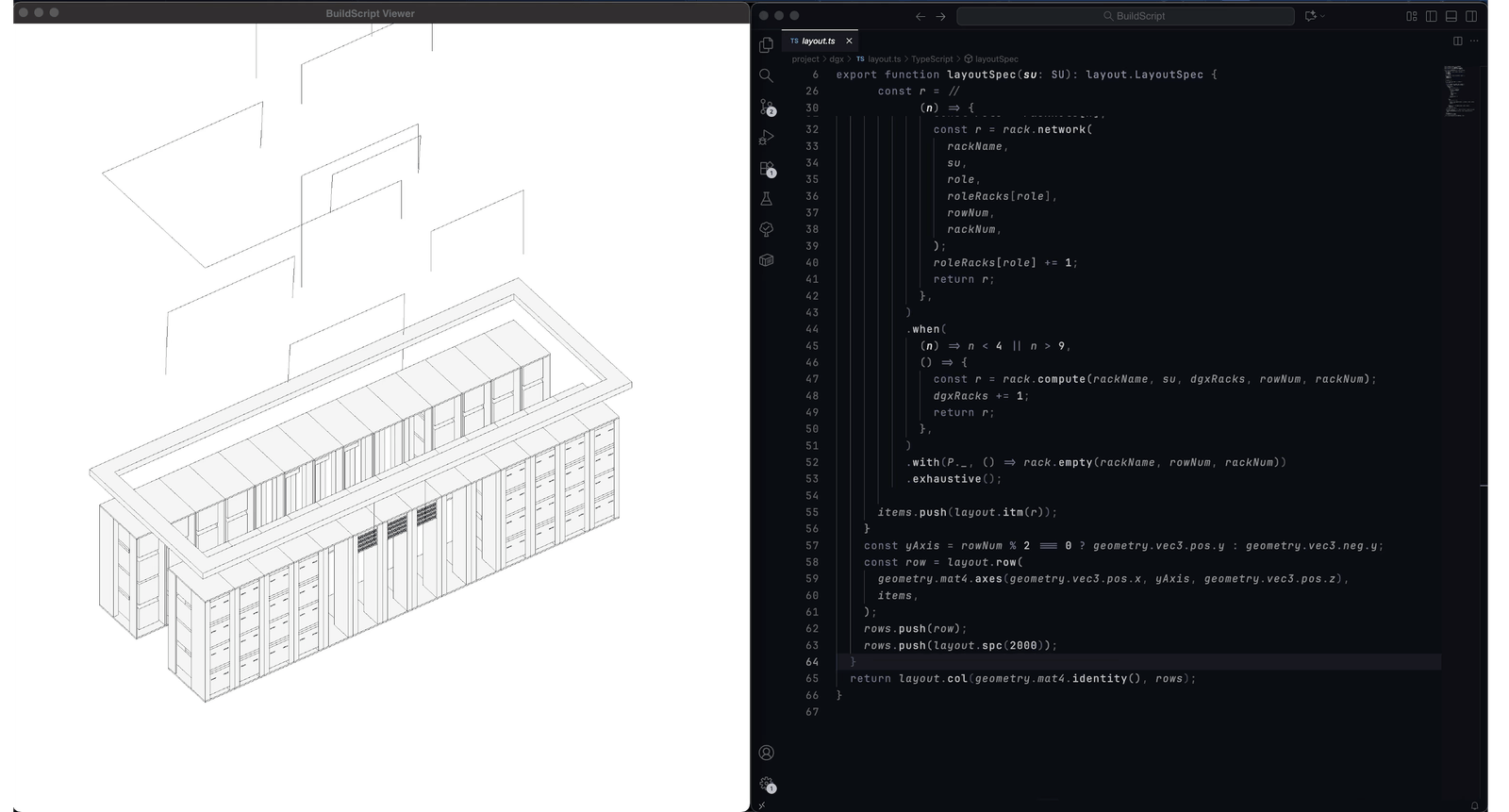

This is exactly the shift TEECOM has been engineering. Our Buildings as Code™ methodology puts these principles into practice by encoding design intent as algorithms rather than manual models, with continuous validation built into every step. BuildScript™ is the tool that makes it concrete: a language purpose-built for engineers to describe connectivity between technology infrastructure systems in the data center domain. Design intent encoded with BuildScript can generate any kind of deliverable to assist with design, modeling, and deployments of the most advanced data center facilities.

The complexity of modern data centers demands a new process. By embracing the Buildings as Code paradigm and implementing a system for guaranteeing correctness, we’re not just improving the workflow; we’re establishing the foundation for a future-proof, high-precision, and hyper-efficient method of delivering mission-critical facilities. To learn more about how Buildings as Code can optimize your next mission-critical project, contact us today.

Alex Serriere is TEECOM’s Principal, Executive Vice President of R&D. Alex has spent over 15 years driving TEECOM’s commitment to innovation and future-proofing client solutions. His research and leadership ensure the team remains current on the latest technology trends and maximizes long-term flexibility for mission-critical facilities. Today, Alex’s team focuses on developing the advanced tools required to manage the complexity of the world’s most sophisticated buildings, including the Buildings as Code™ methodology.

Tyler Kvochick is TEECOM’s Director of Research. He focuses on planning, developing, and testing new approaches to system design that enhance quality and efficiency in the AEC industry. With a master’s degree in architecture and extensive experience as a software developer in construction technology, Tyler possesses a unique cross-disciplinary background. He is driven to create sophisticated yet practical tools, like Buildings as Code, that help eliminate errors, information loss, and ambiguity in complex facility projects.
